Layering Ethics: Gender, Power, and Tech Justice in African AI

Part 7 of the "Beyond the Hype" series

After my session on AI’s physical supply chain and the invisible children mining cobalt in the DRC, Varaidzo Matimba returned to continue the Day 2 conversation on ethics.

Where I had focused on the material foundations (the minerals, the extraction, the environmental and human cost of AI hardware), Varaidzo brought a different but equally critical lens:

Who gets seen within the data?

Who holds power over the systems?

And how do gender, race, and colonial histories shape whose voices get heard in AI-driven evaluation?

These are different layers of ethics, both relevant and necessary for understanding what “responsible AI” needs to mean in African contexts.

Key Concepts

Varaidzo opened by grounding everyone in core concepts that would shape the session. She asked participants which terms they could confidently define: Decolonization, Made in Africa Evaluation, Safety by Design, and Afrofeminism.

Decolonization, she explained, involves the refusal of Western theories, adaptation for cultural relevance, and development of novel African-based methodologies. It means centering African people, drawing methodologies from African realities, and valuing African ways of knowing.

Made in Africa Evaluation builds on this; it’s “an effort at decolonising and indigenising evaluation practice in Africa” through the development of “new evaluation practices, theories, approaches and methodologies originating from African cultures, worldviews, knowledge systems, philosophies and African paradigms.”

These were the foundation for everything that followed.

A Made in Africa Example: Ushahidi

Before diving into problems, Varaidzo showed participants an example of what Made in Africa tech can look like.

Ushahidi was born during Kenya’s 2007-2008 post-election violence, a period of heightened tensions and a media blackout. There was an urgent need for a platform that could aggregate and amplify citizen voices, providing critical information during a crisis.

The platform allowed individuals to report incidents of violence, share information, and contribute to a collective understanding of the situation using SMS, email, and social media. This was before smartphones were ubiquitous, so the system worked with basic mobile phones and simple text messages.

This was citizen-generated evidence at scale. Communities became active participants in documenting what was happening, addressing challenges, and shaping responses.

Ushahidi has since been used for crisis mapping worldwide, including for disasters, elections, and humanitarian crises. It’s a genuine Made in Africa innovation that demonstrates what’s possible when technology is designed from and for African contexts.

Question for you: What makes Ushahidi different from imported tech solutions? Is it just that it was built by Africans, or is there something about how it was designed (the problems it prioritized, the technologies it used, the communities it centered) that makes it distinctly African?

Why Afrofeminism? Why Ethics?

Varaidzo then shifted to the lens that would frame the rest of her session: Afrofeminist tech ethics.

She quoted Sylvia Tamale: “Afrofeminism works to reclaim the rich histories of Black women in challenging all forms of domination, in particular as they relate to patriarchy, race, class, sexuality and global imperialism.”

This is more than feminism applied to Africa. It is a distinct intellectual tradition that recognizes how multiple systems of oppression intersect and compound, and how African women have always been at the forefront of challenging these systems.

But why does this matter for MERL (monitoring, evaluation, research, and learning) practitioners working with AI?

Because AI can perpetuate existing power imbalances and exclusions. Because integrating Afrofeminist and decolonial perspectives offers transformative potential for more equitable and effective practice. And because these approaches center lived realities and challenge the intersectional invisibility that happens in data collection and analysis.

Intersectional invisibility is the key phrase here. It’s not just that some groups are underrepresented in data. It’s that people at the intersection of multiple marginalized identities (African women, rural women, poor women, disabled women) become completely invisible. The data systems don’t even have categories to see them.
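To make that concrete, here is a minimal sketch in Python of how a dataset can look balanced one attribute at a time while whole intersections are nearly or completely empty. Everything in it is an assumption for illustration: the records are invented, the three attributes were chosen for the example, and the threshold of 5 is arbitrary. None of it comes from Varaidzo’s session or from real survey data.

```python
from collections import Counter
from itertools import product

# Hypothetical survey records: (gender, location, disability status).
# The numbers are invented purely for illustration.
records = (
    [("woman", "urban", "none")] * 40
    + [("man", "urban", "none")] * 35
    + [("man", "rural", "none")] * 15
    + [("woman", "rural", "none")] * 6
    + [("man", "urban", "disabled")] * 3
    + [("woman", "urban", "disabled")] * 1
)

FIELDS = ("gender", "location", "disability")
MIN_GROUP_SIZE = 5  # assumed threshold below which a group is effectively unseen


def marginal_counts(rows):
    """Count each attribute on its own, the way a one-dimensional summary would."""
    return {
        field: Counter(row[i] for row in rows)
        for i, field in enumerate(FIELDS)
    }


def thin_intersections(rows, min_size=MIN_GROUP_SIZE):
    """Return every combination of observed values with fewer than min_size records."""
    counts = Counter(rows)
    values = [sorted({row[i] for row in rows}) for i in range(len(FIELDS))]
    return {
        combo: counts[combo]
        for combo in product(*values)
        if counts[combo] < min_size
    }


marginals = marginal_counts(records)
gaps = thin_intersections(records)

print(marginals["gender"])  # women and men both look well represented
for combo, n in gaps.items():
    print(combo, n)  # intersections that are thin or missing entirely
```

In this toy dataset, women make up 47 of 100 records, so a gender-only summary looks fine. But rural disabled women do not appear at all, and the code only notices because it deliberately checks combinations that were never recorded. A system that only counts what is present would never raise the question.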

The Problems Varaidzo Identified

She laid out four interconnected problems with how AI is currently being integrated into MERL practice:

First, the invisibility of African women’s data in global datasets used to train evaluation AI systems. If AI learns from data that doesn’t include African women’s experiences, perspectives, and knowledge, then AI-powered evaluation tools will be blind to precisely the people who are often most affected by the programs being evaluated.

Second, the risk of algorithmic decision-making that further entrenches systematic exclusions and marginalization. AI doesn’t just reflect existing biases; it can amplify and automate them. When AI systems make recommendations about resource allocation, program design, or intervention targeting, they can systematically exclude the people who need support most.

Third, the extractive potential of AI-enabled MERL that harvests community data without a reciprocal benefit. Communities provide data through surveys, interviews, and monitoring systems, but the insights, the tools, and the benefits flow elsewhere. The physical extraction I discussed in my session (minerals from the ground) has a parallel in data extraction (information from communities).

Fourth, the tension between the technical complexity of AI systems and meaningful community participation in their design and governance. AI systems are technically sophisticated. That complexity can be used to justify keeping communities out of decision-making: “It’s too complicated, you wouldn’t understand.” But if communities can’t participate in designing and governing the AI systems that evaluate programs affecting them, then those systems will inevitably serve other interests.

Three Types of Harm

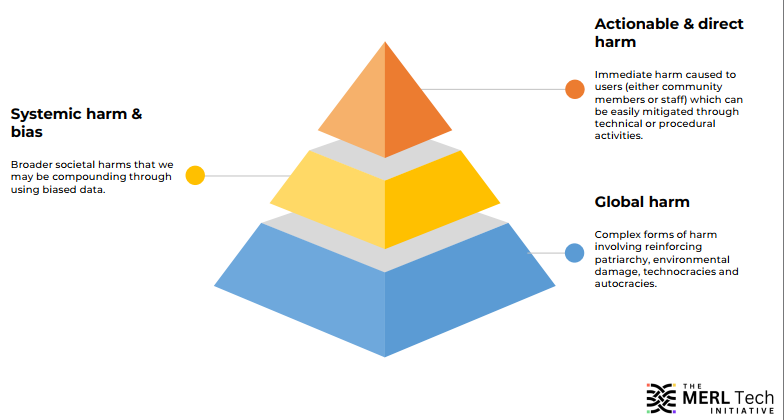

Varaidzo introduced a framework for thinking about AI harm that goes beyond individual privacy violations or data breaches.

Actionable and direct harm is immediate harm caused to users, either community members or staff, which can be relatively easily mitigated through technical or procedural activities. This includes things like privacy violations, incorrect information leading to bad decisions, or responses that lack local relevance and reinforce biases.

Systemic harm and bias refers to broader societal harms that we may be compounding through using biased data. This is about how AI systems can entrench existing inequalities at scale: not individual incidents, but patterns that affect entire communities or demographics.

Global harm involves complex forms of harm like reinforcing patriarchy, environmental damage, supporting technocracies and autocracies. These are the hardest to trace and address because they’re diffuse, long-term, and systemic.

She then broke down specific concerns within these categories:

Privacy: Users may not understand how their data is being used by commercial entities or NGOs.

Accuracy: Users may receive incorrect responses and make real-world decisions based on them, or spread misinformation.

Relevance and bias: Users may receive responses that aren’t strictly incorrect, but lack local relevance or reinforce gender norms and cultural biases.

Safeguarding: Users at acute risk of physical or emotional harm may not receive appropriate responses.

Global inequities: Users who can reach AI tools through a phone may see their advantages increase compared to those who cannot, which especially affects women, who have less access to technology.

Workers’ rights: Staff at organizations working on fine-tuning AI may be exposed to traumatic content without adequate support.

Environmental harms: The commercial model powering AI takes vast amounts of energy, connecting back to the physical infrastructure I discussed in my session.

This framework is useful because it shows harm isn’t just one thing. We need different strategies for different types of harm. Technical fixes might address actionable harm. But systemic and global harm require deeper interventions, changes in power structures, not just better algorithms.

Evaluating for Tech Justice

Varaidzo then posed a challenge. We need new benchmarking frameworks that assess whether AI systems actually advance Afrofeminist and decolonial goals.

This isn’t about whether AI systems are “fair” by Western definitions of fairness. It’s about developing metrics that measure:

Participatory ownership: Do communities have genuine control over AI systems affecting them, or just consultation rights?

Cultural responsiveness: Do AI systems recognize and respect diverse cultural contexts, or impose one-size-fits-all solutions?

Epistemic justice: Do AI systems validate diverse ways of knowing, or privilege only certain forms of knowledge?

These are fundamentally different questions from what most AI ethics frameworks ask. And they require evaluation approaches that center African perspectives from the start.

Decolonial Approaches: Two Key Questions

Varaidzo concluded with two questions for group discussion that were meant to guide practical work:

Who currently holds the power to design, deploy, and interpret AI and MERL technologies in African contexts, and how can this power be shifted toward communities?

This is a power mapping exercise. Not just “who uses these tools” but who controls them. Who decides what problems AI should solve? Who determines what data gets collected? Who interprets the results? Who benefits from the insights?

And critically: How can that power be shifted? Not requested politely, but actually transferred.

How can African feminist and intersectional perspectives be applied to ensure AI systems and evidence practices are inclusive, equitable, and responsive to marginalized voices?

This is about fundamentally rethinking what counts as evidence, whose voices shape evaluation questions, and how AI systems can be designed to see people at the intersections of multiple marginalized identities.

Question for you: If you were to do a power mapping of the AI and evaluation systems in your context, who would you find holds control? And what would it actually take to shift that power?

How These Layers Connect

My session focused on one layer of ethics: the material and environmental costs of AI, the human rights violations in supply chains, the communities bearing physical harm while AI development celebrates innovation.

Varaidzo’s session focused on another layer: the epistemological and social costs, the communities being erased from data, the power structures determining whose knowledge counts, the gender and intersectional dimensions of AI exclusion.

Both layers are essential.

You can’t have ethical AI without addressing the children mining cobalt. And you can’t have ethical AI without addressing the African women whose experiences are invisible to the systems, the communities whose knowledge gets extracted without reciprocity, the power structures that keep decision-making in distant boardrooms.

The physical extraction and the data extraction are parallel processes. The environmental harm and the epistemic harm compound each other. The lack of ownership over minerals and the lack of ownership over AI systems reflect the same structural problem.

What This Means for Practice

If we take both layers seriously, what changes?

For evaluation practice: We need to assess not just program outcomes, but the tools we use to assess them. Where did these tools come from? Whose knowledge do they recognize? Who controls them? What’s their supply chain, both physical and epistemological?

For AI development: We need to center communities from the start. Not just in data collection, but in design, governance, benefit-sharing, and power. And we need to trace the full impact from minerals to models to social effects.

For MERL tech selection: We need criteria that go beyond “does it work?” to ask “who does it work for? Who does it harm? Whose power does it reinforce? What would an Afrofeminist, decolonial alternative look like?”

For institutional practice: We need new benchmarking frameworks that measure participatory ownership, cultural responsiveness, and epistemic justice, not just efficiency, scalability, and cost-effectiveness.

The Ushahidi Question Revisited

Coming back to Ushahidi, why does it matter as an example?

Because it demonstrates that Made in Africa tech is possible. That citizen-generated evidence can work at scale. That communities can be active participants, not just data sources.

But Ushahidi also raises questions:

Has it maintained its community-centered origins as it scaled globally?

Who controls it now? Whose crisis mapping needs does it prioritize?

Has success required compromises with the extractive models Varaidzo critiqued?

I don’t know the answers, but the questions matter because they show that even genuinely innovative, community-rooted technology can be pulled back into extractive patterns if we’re not vigilant about power and ownership.

The Afrofeminist lens Varaidzo brought asks us to always check: Who holds power? Who benefits? Who’s invisible? And, most importantly, how do we shift this?

Two Frameworks, One Goal

By the end of the Day 2 morning sessions, participants had two frameworks to work with:

From my session: African-Centred Critical Evaluation of AI, with its five pillars including upstream impact tracing from minerals to social outcomes.

From Varaidzo’s session: Afrofeminist tech ethics and decolonial approaches that center power analysis, intersectional invisibility, and epistemic justice.

These frameworks address different dimensions of the same structural problem. Mine asks: What is AI made of, and who pays the price? Varaidzo’s asks: Who gets seen in AI systems, and who holds the power?

Together, they create a fuller picture of what ethical AI in African contexts actually requires. Not just better algorithms or cleaner supply chains (though we need those). But fundamental shifts in power, ownership, and whose intelligence gets recognized and valued.

What I’m Sitting With

After both sessions, I found myself thinking about how these layers compound.

The children mining cobalt in the DRC are invisible to AI supply chain narratives. African women’s experiences are invisible to AI training data. Both invisibilities serve the same function: they allow extraction to continue without accountability.

The lack of community power over mining operations mirrors the lack of community power over AI systems. Both are justified through technical complexity: “You wouldn’t understand the global mineral market.” “You wouldn’t understand the algorithms.”

The environmental harm and the epistemic harm both stem from treating Africa as a source of raw materials, whether physical minerals or raw data, to be extracted, processed elsewhere, and sold back at premium prices.

Varaidzo’s Afrofeminist lens shows how gender compounds all of this. African women are least likely to have smartphones, most likely to be invisible in datasets, least likely to hold power in tech companies or mining operations, most likely to bear the environmental and social costs of extraction.

When we talk about “responsible AI,” both layers need to be part of the conversation. Not one or the other. Both.

Next week: The afternoon sessions from Day 2, where we dove into specific risks, ethical concerns, and practical actions.

Thank you for reading!
