AI Ethics and Personal Boundaries

Part 8 of the “Beyond the Hype” series

By the afternoon of Day 2, workshop participants had been sitting with heavy material: my session on AI’s physical supply chains and the children mining cobalt in the DRC, and Varaidzo’s session on Afrofeminist tech ethics, power imbalances, and epistemological erasure.

Both sessions focused on structural problems: systems of extraction, patterns of exclusion, and frameworks that need fundamental rethinking.

Karen Smiley’s session brought a different lens: What can you actually do about this? Tomorrow morning, when you sit down to work, what choices do you have?

This was the individual, practical layer of ethics. And it started with a question that grounded everything that followed.

Starting With Values

Before diving into risks or actions, Karen asked participants to do something personal: identify 3-5 core values and prioritize them.

Not organizational values. Not sector best practices. Your values. What matters to you? What guides your decisions when things get complicated?

She offered starting points: the philosophy of Ubuntu, principles from the South African Constitution, and the eight values many South Africans share according to Heartlines research (accepting difference, responsibility, forgiveness, perseverance, self-control, honesty, compassion, second chances).

But the exercise was individual. Write down what you value. Put them in order. Because the AI usage policy you’d create later in the session needed to be rooted in something real, not just compliance or best practices, but actual values you’re willing to defend.

This matters because when you face decisions about AI use (whether to use a tool, how to use it, what data to share), having articulated values gives you a foundation to decide from rather than just react.

Question for you: If you had to name your top 3-5 values right now, what would they be? And how do they shape how you think about using AI in your work?

Five Everyday AI and Data Risks

Karen then walked through five practical risks that affect people using AI tools, especially in MERL work:

Risk 1: Legal Alignment and Copyright Infringement

AI tools are facing dozens of copyright lawsuits globally. When you use AI-generated content, you’re potentially exposed to copyright claims, to unexplainable decisions influenced by biased training data, and to liability for sharing inaccuracies. You may not even be able to copyright your own AI-assisted work.

Risk 2: Security and Confidentiality

What you put into generative AI tools often lacks privacy or confidentiality protections, especially with free accounts. Data collected from users can feed into the AI platform and “leak” back out in someone else’s chat. Data breaches are common. “Anonymous” data often isn’t; it can be reverse-engineered to identify people. And both ChatGPT and Grok exposed personal chats via Google Search in July 2025.

For MERL practitioners: If you’re putting beneficiary data, community information, or program details into AI tools, you may be exposing confidential information without realizing it.

Risk 3: Workforce Impact

AI can perpetuate historical biases in HR and management decisions, with legal risks from unexplainable AI-advised decisions. Support is weak for languages and cultures other than Western English, and poor for people with disabilities. Women are disproportionately affected: they express more ethical concerns about AI and are judged more harshly for using it. Unrealistic productivity expectations create technostress, and trust in managers who overuse AI for messaging weakens.

Risk 4: Branding Confusion

When everyone is using the same AI tools to generate content, uniqueness suffers; all outputs draw from the same well. There are ownership rights issues where the AI tool company may retain ownership of what you create. And you need to check terms and conditions for licensing rights.

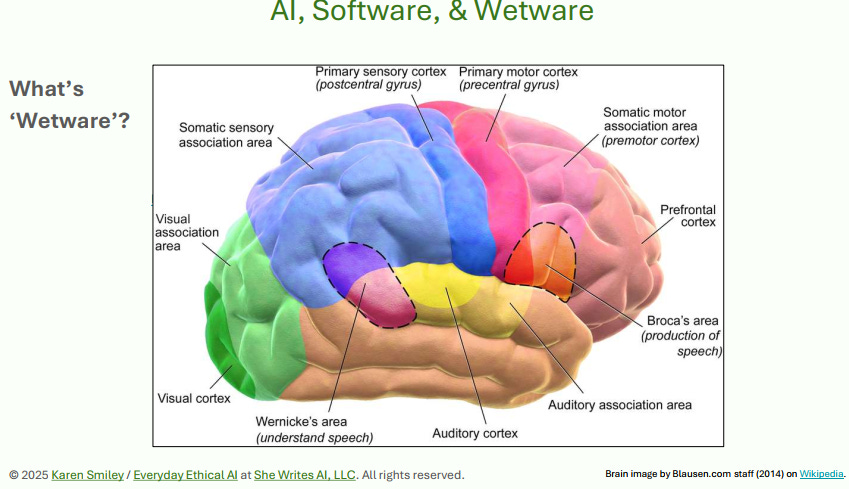

Risk 5: What Happens to Our Wetware (Brains)?

Karen used “wetware” to mean our human brains and asked what happens to our thinking when we rely heavily on AI. There are learning impacts, both positive and negative, for teachers and students. There are also changes in productivity and quality: AI can be helpful for neurodivergent people and second-language speakers, but it carries risks of creating “slop” and of becoming dependent on “cognitive prosthetics.”

Question for you: Which of these five risks resonates most with your experience? Have you noticed changes in your own thinking or work patterns since you started using AI tools?

Five Steps in the AI Ecosystem, Five Ethical Concerns

Karen then mapped ethical concerns onto different stages of the AI ecosystem, from data centers to model building to actual tool usage.

Concern 1: Adverse Environmental Impacts (Data Centers)

More data and more AI algorithms require more data centers. Building them consumes minerals like cobalt, coltan, and lithium, and creates hazardous wastes like lead and mercury. Mining these minerals often involves exploitation of workers and resources in the Global South.

This is where Karen’s material connects directly to what I covered earlier. The cobalt and coltan she mentioned in connection with data centers are the same minerals I traced to children mining in the DRC. Karen’s framing emphasized environmental impact and energy consumption. Mine traced the specific human cost in specific places. Both layers matter.

In operation, massive data centers use enormous quantities of power and water and increase CO2 emissions. Both training AI models and running them (inferencing) matter, with inferencing accounting for 80-90% of ongoing energy use. Data centers are even competing directly with agricultural water needs in some areas.

Concern 2: Unethical Data Sourcing (Training Data)

AI tools need data, and most data comes from humans. Many AI companies don’t source their data equitably. Data dignity is weak or lacking. The 3Cs Rule (Consent, Credit, Compensation) is rarely followed. Taking copyrighted “publicly available” data that isn’t public domain is stealing.

AI companies claim it’s impossible to build well-performing large language models without this stolen data. The Common Pile v0.1 project challenged that claim by assembling a large dataset entirely from openly licensed and public domain text and training language models on it.

Karen also noted that polluted datasets “poison the well”: if training data includes biases, errors, or harmful content, the AI inherits those problems. And there are concerns about EdTech relationships with data brokers who sell student data.

Concern 3: Exploitation of Data Workers (Data Processing)

Data needs to be cleaned and labeled (“enriched”) so it can be used in AI training and content moderation. This labeling work is tedious and can be emotionally stressful. Some workers have sued and won PTSD claims after being exposed to traumatic content during moderation work.

Some large employers use unfair labor practices, withholding wages unreasonably or underpaying workers in Africa (39 nations), South America, and Asia. “Ethics dumping” and “ethics washing” are common: companies outsource the harmful work while claiming ethical practices. Labelers’ intelligence is dismissed or denied. Harms are deeper in the Global South.

This connects to Varaidzo’s point about extractive AI-enabled MERL. The data extraction isn’t just from communities being evaluated; it’s also from workers processing that data under exploitative conditions.

Concern 4: Harmful Model Biases (Model Building)

Biases permeate society and show up in data. Training AI on that data without care can perpetuate and worsen those biases. Datasets used by AI companies tend to be strongly US/Western English-centric. Diverse teams do better at reducing biases, but most AI teams aren’t diverse. Underrepresented groups have received poorer service and suffered measurable harms because of gender, race, age, and other biases.

Concern 5: Impacts on Lives and Livelihoods (AI Tool Usage)

AI tools can deliver benefits, but some, especially generative AI, are disruptive to livelihoods and careers. This is happening first for creative people, but no job is safe. AI companies using models trained on stolen content compete directly against the people they stole from, a pattern Karen called “socializing the costs and privatizing the profits.”

Affected workers include translators, voice artists, musicians, writers, software developers, and customer service workers. Deepfakes and irresponsible chat tools have harmed and killed children.

Five Suggested Actions

After laying out risks and concerns, Karen offered practical actions individuals can take:

Action 1: Choose Your AI Tools Wisely

Find out how and where your data is already being used: on the job, at school, in government, in your home. Look for responsible AI certifications like ISO 42001 or Fairly Trained. Do your homework on AI tool providers and “follow the money” on hype. Look for companies that proactively address biases.

Action 2: Protect Your Data

Avoid oversharing kids’ information online. Consider “poisoning” images you post (adding imperceptible changes that make them harder for AI to scrape and use). Be cautious with medical, financial, or confidential personal data. Define a family code word to protect against deepfakes. Build your family’s AI literacy. Look for new tools that use in-device or edge AI rather than sending data to cloud servers.

Action 3: Use Generative AI Tools Wisely

Look for non-AI alternatives first. Try prompting frameworks to get results quicker, but monitor your usage. Always verify references and information from AI tools. Stay alert for biases in outputs. Avoid AI-shaming people whether they use AI or don’t; save criticism for unethical companies. Avoid creating “AI slop”: low-quality, generic AI-generated content that clutters the information ecosystem.

Action 4: Seek Out Diverse Voices About AI

In workplaces, push for diverse hiring and inclusion in product development. In projects, advocate for closed-loop monitoring. In schools, ensure policies are inclusive of people of all genders, people with disabilities, second-language speakers, and neurodivergent people. Keep learning from diverse perspectives.

Action 5: Define Your Own AI Usage Policy

We all have an AI usage policy; it’s just probably not written down. So write it down. List and prioritize your values. List your tasks and map them to boundaries. What do you do now with AI? What don’t you do yet? What won’t you ever do with AI? Why or why not, and which values guide those decisions? Share it (be transparent) and evolve it over time.

The Human/AI Boundary Map

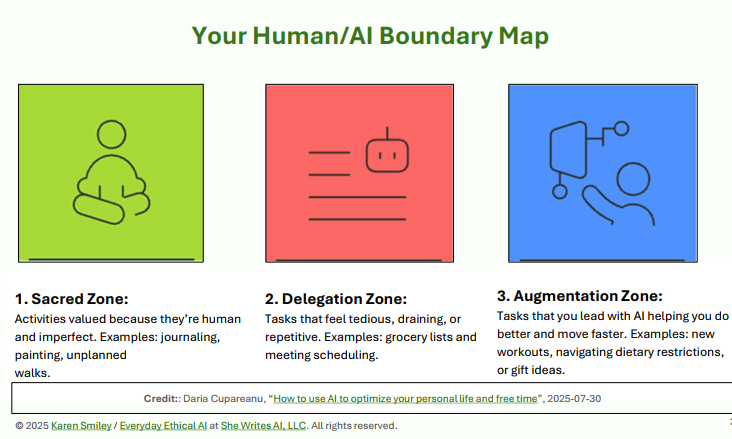

Karen then introduced a practical framework for thinking about where AI fits in your work: the Human/AI Boundary Map, with three zones developed by Daria Cupareanu.

The Sacred Zone: Activities you value because they’re human and imperfect. Things you wouldn’t want AI to do even if it could. Examples might include journaling, certain creative work, unplanned conversations, relationship-building.

The Delegation Zone: Tasks that feel tedious, draining, or repetitive. Things you’re happy to hand off to AI if it can do them competently. Examples might include scheduling, formatting documents, generating meeting summaries, creating grocery lists.

The Augmentation Zone: Tasks where you lead but AI helps you do better and move faster. You’re still driving, but AI augments your capabilities. Examples might include research, brainstorming, learning new skills, analyzing patterns in data.

The boundaries between these zones are personal. They depend on your values, your skills, your context, and your comfort level. And they can shift over time as both you and the technology change.

The Guided Writing Exercise

Karen then gave participants 15 minutes for a guided writing exercise: draft your own AI usage policy.

Using the values they’d identified at the start, participants mapped their daily MERL tasks onto the boundary zones:

Tasks they purposely use AI for now, and why

Tasks they would like to use AI for, and why

Tasks they would not use AI for, and why

Then they looked for connections between their choices and their values, noting any areas of friction or tension.

After writing individually, participants discussed their draft policies and shared them.

This exercise matters because it forces you to articulate boundaries before you need them in the moment. When you’re under deadline pressure or facing a difficult task, having a pre-defined policy helps you make decisions aligned with your values rather than just taking the path of least resistance.
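For readers who like to keep notes in a structured form, the exercise above could be sketched in a few lines of Python. This is only one possible way to write a policy down, not something Karen presented: the names `Zone`, `PolicyEntry`, `group_by_zone`, and `friction_points` are hypothetical, and the example tasks, reasons, and values are illustrative assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Zone(Enum):
    SACRED = "sacred"              # keep human, even if AI could do it
    DELEGATION = "delegation"      # hand off to AI if it is competent
    AUGMENTATION = "augmentation"  # you lead, AI assists


@dataclass
class PolicyEntry:
    task: str
    zone: Zone
    reason: str
    values: list = field(default_factory=list)  # values guiding this choice


def group_by_zone(entries):
    """Return a dict mapping each Zone to the task names placed in it."""
    grouped = {zone: [] for zone in Zone}
    for entry in entries:
        grouped[entry.zone].append(entry.task)
    return grouped


def friction_points(entries, core_values):
    """Return tasks whose entry cites none of the prioritized core values."""
    core = {v.lower() for v in core_values}
    return [e.task for e in entries
            if not core.intersection(v.lower() for v in e.values)]


# Hypothetical example data, for illustration only.
core_values = ["honesty", "compassion", "responsibility"]
policy = [
    PolicyEntry("journaling", Zone.SACRED,
                "the value is in doing it myself", ["honesty"]),
    PolicyEntry("formatting documents", Zone.DELEGATION,
                "tedious and low risk", ["responsibility"]),
    PolicyEntry("analyzing patterns in data", Zone.AUGMENTATION,
                "faster, but I verify results", []),
]

if __name__ == "__main__":
    for zone, tasks in group_by_zone(policy).items():
        print(f"{zone.value}: {tasks}")
    print("revisit:", friction_points(policy, core_values))
```

In this sketch, a task that cites none of your core values is flagged for a second look. That is a rough stand-in for the “friction or tension” step in the exercise, which in practice is a matter of reflection rather than lookup.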

About Karen’s Layer

Karen’s session was practical, actionable, and necessary. Participants left with concrete tools: a boundary map, a draft policy, and specific actions they could take starting the next day.

Individual ethical choices matter. Being thoughtful about which AI tools you use, protecting sensitive data, and defining clear boundaries are real practices that reduce real harms. But you might be wondering:

Are these sufficient?

Karen advised choosing AI tools with responsible certifications like Fairly Trained or ISO 42001. That’s good guidance. But as my framework showed, even “fairly trained” models run on servers built with minerals extracted under exploitative conditions. Even “responsible” AI companies participate in supply chains powered by child labor. You can be extremely careful about which tools you use and still be participating in these exploitative systems.

Karen’s advice to protect your data and avoid oversharing is wise. But the extractive potential Varaidzo identified (AI-enabled MERL that harvests community data without reciprocal benefit) isn’t solved by individual data protection. It’s a structural problem about who controls systems and who benefits from them.

The boundary map exercise asks “What tasks will I use AI for?” But it doesn’t ask “Who mined the cobalt that powers this AI?” or “Whose knowledge was erased to make this AI’s knowledge seem universal?”

This is a reflection on positioning: being clear about what individual ethics can and can’t do.

Three Layers, All Necessary

By the end of Day 2, participants had three distinct but complementary layers of ethics to work with:

My layer: Material and environmental ethics

What is AI made of?

Who pays the physical price?

How do we trace upstream impacts from minerals to models?

Varaidzo’s layer: Epistemological and power ethics

Who gets seen in AI systems?

Who holds power over design and governance?

How do gender, race, and colonial histories shape whose voices matter?

Karen’s layer: Practical and individual ethics

What choices do I have right now?

How do I navigate systems I can’t fully escape?

What boundaries align with my values?

All three layers are essential. You can’t address AI ethics without considering the physical supply chains. You can’t address it without examining power and epistemology. And you can’t address it without giving people practical tools to make better decisions in their daily work.

However, the individual layer is where most ethics conversations stop. We get personal AI policies, organizational guidelines, individual tool choices. We feel like we’re being responsible. Meanwhile, the structural problems (the children mining cobalt, the communities whose knowledge gets extracted, the power imbalances determining who benefits) continue unchanged.

Personal ethical choices are a floor, not a ceiling. They’re what we do while we’re also working on structural change. They’re how we reduce harm in the immediate term while building toward systemic transformation. If we stop at personal policies and think we’ve solved the ethics problem, we’ve just made extraction more comfortable for ourselves while leaving the foundations unchanged.

The Values Exercise Revisited

This is why I keep thinking about how Karen started, with that values exercise.

On one level, it was setting up the personal AI policy work. Your values guide your boundaries. But on another level, articulating your values could be a foundation for something more than individual navigation. It could be a starting point for collective action.

If a group of evaluators all identified “justice” or “dignity” or “community benefit” as core values, and then looked honestly at the AI systems they’re using, what would they see? What would they refuse to participate in? What changes would they demand? Personal values, articulated clearly and held collectively, could become the basis for pushing back against extractive systems rather than just navigating them more carefully.

So, do we stop at individual boundaries or use those boundaries to identify where collective action is needed?

What This Means for Practice

If we take all three layers seriously (material, epistemological, and individual), what actually changes?

You define personal AI usage policies (Karen’s layer). You choose tools more carefully, protect sensitive data, set clear boundaries.

And you ask where those tools came from, who mined the materials, what the supply chain looks like (my layer).

And you examine who holds power over the tools, whose knowledge they recognize, who benefits and who’s erased (Varaidzo’s layer).

And you recognize that your individual choices, while necessary, aren’t sufficient. You need collective action, structural change, and willingness to refuse participation in systems that can’t be made ethical through better individual choices alone.

This is harder than just having a personal AI policy. It requires holding multiple tensions at once:

Use tools thoughtfully AND question the systems producing those tools

Make individual ethical choices AND work for structural change

Navigate current reality AND push for different futures

But maybe that’s what ethical practice actually looks like in a compromised world. Not purity. Not perfect solutions. But clear-eyed engagement with all three layers and a refusal to let any one layer let us off the hook for the others.

Do you have a personal AI usage policy, written or unwritten? What values guide your boundaries? And what would it take to move from individual ethical choices to collective action for structural change?

Next in the series: Closing reflections from the workshop as we transition into “What AI Teaches Us About Ourselves,” a session I hosted at OFU 2025 (Online Facilitation Unconference).

Thank you for reading!
